The Lobster Is Loose and Coming to the Enterprise Next

Why Agentic Systems Change Enterprise Leadership

The lobster is loose.

At first, that sounds like a joke.

A tiny red mascot. A strange little phrase from the weird and wonderful world of personal AI agents. A meme with claws.

But the more I think about it, the more I believe it captures the enterprise AI moment better than almost any polished analyst phrase.

Because the lobster is not just loose in personal workflows anymore.

It is coming to the enterprise next.

And when it gets there, the conversation changes.

This is no longer just about prompts, productivity, or whether someone can summarize a document faster. This is about autonomous capability entering real business systems.

Agents that can read, write, retrieve, route, decide, escalate, trigger, and act.

That means the real question for leaders is no longer:

Can AI help us work faster?

The real question is:

What happens when AI can take action inside the enterprise, and who is actually at the helm?

Personal AI Was the Preview

Peter Steinberger’s recent TED talk about OpenClaw is fascinating because it does not sound like the usual enterprise AI keynote.

It sounds more like a builder describing the moment when software became fun again.

He describes realizing that AI agents could handle the boilerplate, the plumbing, and the tedious parts of software development. Then he says something that should make every enterprise leader pause:

“Agents change who can build things.” (The Singju Post)

That line matters.

Because this is not only about developers.

It is about access.

For years, most meaningful work inside companies required layers of coordination. You needed approvals, technical resources, budget cycles, project intake, roadmap alignment, security reviews, access provisioning, and sometimes a meeting to decide whether you needed another meeting.

The modern enterprise became very good at managing complexity.

But complexity also became a moat around action.

Agentic systems threaten that moat.

Not because they magically solve every business problem, but because they shrink the distance between intention and execution.

A professional can now describe an outcome, delegate pieces of the work to an agent, inspect the result, refine the direction, and move again.

That changes the rhythm of work.

It changes what individuals can do.

And eventually, it changes what organizations must govern.

The Enterprise Will Not Adopt Agents Cleanly

Here is the uncomfortable part.

Most enterprises are going to talk about agentic AI as if it will arrive through a clean, controlled, top-down adoption process.

There will be a strategy deck.

There will be a steering committee.

There will be a pilot.

There will be a framework.

There will be many tasteful diagrams.

There will be AI heroes to celebrate.

But that is probably not how agents will actually enter the enterprise.

They will seep in through the cracks.

They will show up inside SaaS platforms, developer tools, productivity suites, workflow automation tools, security platforms, CRM systems, ticketing systems, analytics tools, browser extensions, personal devices, and employee experiments.

Some will be officially approved.

Some will be quietly used.

Some will be embedded so deeply into existing tools that people will not even think of them as agents.

That is the real enterprise challenge.

The agentic shift will not arrive as one big program.

It will arrive as hundreds of small capabilities that suddenly have permission to act.

Very lobster behavior, honestly.

Jensen Huang Is Right: Every Company Needs a Strategy

When NVIDIA introduced NemoClaw, Jensen Huang reportedly said, “Every company now needs to have an OpenClaw strategy.” He also described OpenClaw as the “operating system for personal AI.” (TechRadar)

That is a big claim.

But I think the deeper point is not about one specific platform.

The real message is that agentic systems are becoming an operating layer.

Not just another app.

Not just another chatbot.

Not just another productivity feature.

An operating layer.

That distinction matters.

Applications help people complete tasks.

Operating layers shape how work happens.

They define what can be done, what can be accessed, what can be automated, what can be delegated, and what can be coordinated.

That is why this moment is bigger than another AI tool launch.

If agents become a new interface for enterprise work, then leaders have to ask a different class of questions.

Not just:

Which AI tools should we buy?

But:

What kind of work should agents be allowed to perform?

Which systems can they touch?

What data can they access?

What decisions require human approval?

How do we monitor agent behavior?

How do we revoke access?

How do we investigate incidents?

How do we prove accountability?

That is where the real enterprise work begins.

The Problem Is Not Intelligence. It Is Action.

The first wave of generative AI forced companies to think about information.

Can we trust the answer?

Can we protect the prompt?

Can we prevent sensitive data from leaking?

Can we stop hallucinations from creating bad decisions?

Those questions still matter.

But agentic AI adds a more serious layer.

Action.

An agent does not just answer a question. It can actually do things.

It can open a ticket.

Update a record.

Send a message.

Query a database.

Trigger a workflow.

Schedule a meeting.

Summarize a security review.

Draft a risk decision.

Call an API.

Spin up another agent.

Follow up while the meeting is still happening.

Peter described a future where a meeting agent could listen, fact-check with sub-agents, and send follow-ups before the meeting even ends. He also described a world where people may have multiple specialized agents that work together securely. (The Singju Post)

That is powerful.

It is also exactly why enterprise leaders need to pay attention.

Because the risk is no longer only that AI gives a bad answer.

The risk is that AI takes a bad action.

Or takes the right action in the wrong system.

Or takes an approved action with unapproved data.

Or completes a task without the business understanding who owns the outcome.

That is not a science fiction concern.

That is a governance concern.

The Agent Is a Non-Human Identity

This is where the enterprise conversation needs to get more serious.

If an agent can act, it needs an identity.

Not a shared account.

Not a vague service token.

Not “Jaime’s AI did it.”

Not a digital intern wearing a fake mustache and borrowing someone’s permissions.

A real enterprise agent needs to be treated as a non-human identity with clear controls.

That means every agent needs:

A defined owner.

A business purpose.

A unique identity.

Scoped permissions.

Approved tools.

Data boundaries.

Logging.

Monitoring.

Lifecycle management.

Periodic access review.

A kill switch.

This is not bureaucracy for the sake of bureaucracy.

This is the foundation for trust.
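To make the checklist above concrete, here is a minimal sketch of what a registry record for a single agent might look like. Everything in it is an assumption for illustration: the `AgentIdentity` class, its field names, the 90-day review window, and the tool and dataset names are hypothetical and do not come from any specific product.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class AgentIdentity:
    """Hypothetical registry record for one agent (illustrative names only)."""
    agent_id: str                 # unique identity, never a shared account
    owner: str                    # the accountable human
    purpose: str                  # the business purpose
    allowed_tools: frozenset      # approved tools
    allowed_data: frozenset       # data boundaries
    last_access_review: date      # supports periodic access review
    enabled: bool = True          # kill switch state
    audit_log: list = field(default_factory=list)  # logging

    def disable(self, reason: str) -> None:
        """Kill switch: refuse all further actions and record why."""
        self.enabled = False
        self.audit_log.append(f"DISABLED: {reason}")

    def review_overdue(self, today: date, max_age_days: int = 90) -> bool:
        """True when the last access review is older than the assumed window."""
        return today - self.last_access_review > timedelta(days=max_age_days)

    def authorize(self, tool: str, dataset: str) -> bool:
        """Allow an action only if the agent is enabled and within scope."""
        allowed = (
            self.enabled
            and tool in self.allowed_tools
            and dataset in self.allowed_data
        )
        verdict = "ALLOW" if allowed else "DENY"
        self.audit_log.append(f"{verdict} tool={tool} data={dataset}")
        return allowed


# Example: a ticket triage agent with a narrow scope.
triage_bot = AgentIdentity(
    agent_id="agent-ticket-triage-01",
    owner="service-desk-lead",
    purpose="Route incoming helpdesk tickets",
    allowed_tools=frozenset({"ticketing.create", "ticketing.update"}),
    allowed_data=frozenset({"helpdesk_tickets"}),
    last_access_review=date(2025, 1, 15),
)

in_scope = triage_bot.authorize("ticketing.create", "helpdesk_tickets")
out_of_scope = triage_bot.authorize("email.send", "helpdesk_tickets")
```

The point of the sketch is that every decision, allowed or denied, lands in the log, and that disabling the agent is a single call rather than a hunt through shared credentials.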

Enterprises already struggle with human identity, privileged access, service accounts, machine identities, third-party access, and over-permissioned collaboration platforms.

Now we are adding autonomous or semi-autonomous actors into that environment.

If companies do not solve identity for agents, they will not have enterprise AI governance.

They will have vibes in a trench coat.

Data Protection Gets Harder

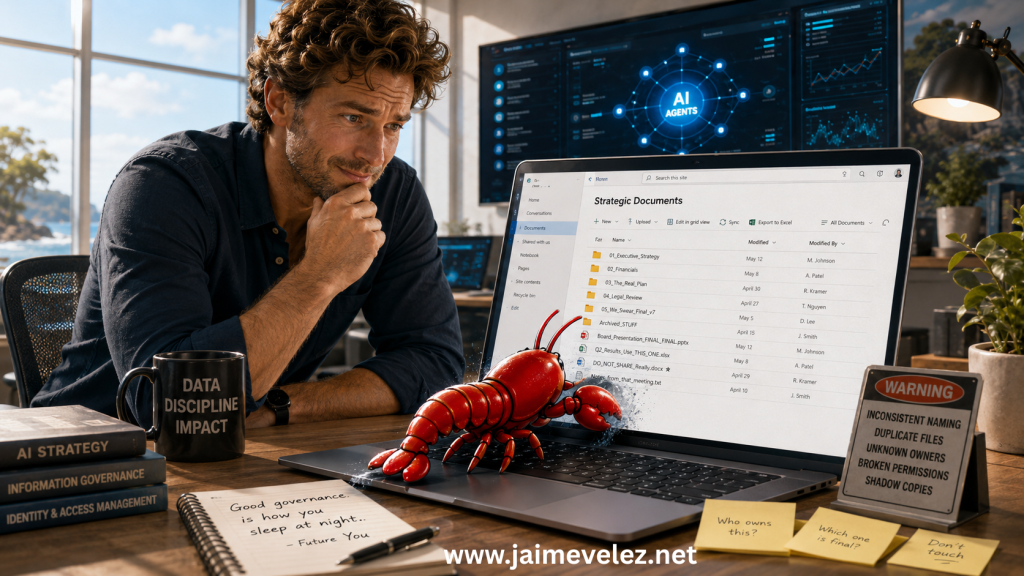

Enterprise leaders also need to understand that agentic systems change the shape of data risk.

Traditional data protection focuses heavily on where data is stored, who can access it, and how it moves.

Agents complicate all three.

An agent may retrieve data from one system, summarize it using another tool, combine it with email context, write the result into a ticket, and notify a stakeholder in chat.

Each step may look reasonable in isolation.

The full chain may create a risk no one intended.

That is the enterprise challenge.
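One way to reason about a chain like this is to track the most sensitive data the agent has picked up so far and compare it with each place it writes. The sketch below assumes a simple three-level sensitivity scale and assumes that summarizing data does not lower its sensitivity; the labels, the `Step` class, and `find_chain_violations` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed sensitivity scale: a higher number means more restricted.
SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2}


@dataclass(frozen=True)
class Step:
    name: str
    reads: Optional[str] = None      # label of the data this step reads
    writes_to: Optional[str] = None  # highest label the destination may hold


def find_chain_violations(steps):
    """Return the names of steps that write data above their destination's limit."""
    carried = 0  # most sensitive label the agent has picked up so far
    violations = []
    for step in steps:
        if step.reads is not None:
            carried = max(carried, SENSITIVITY[step.reads])
        if step.writes_to is not None and carried > SENSITIVITY[step.writes_to]:
            violations.append(step.name)
    return violations


# The chain described above, with assumed labels for each system.
chain = [
    Step("retrieve record from system of record", reads="confidential"),
    Step("summarize with another tool"),
    Step("combine with email context", reads="internal"),
    Step("write result into ticket", writes_to="confidential"),
    Step("notify stakeholder in chat", writes_to="internal"),
]

violations = find_chain_violations(chain)
```

With these assumed labels, only the final chat notification is flagged: each step looks fine on its own, but the summary still carries confidential content into a channel that is only cleared for internal data.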

The danger is not only data leakage.

It is data movement without clear purpose.

Data combination without context.

Automation without review.

Workflow execution without accountability.

This is especially important in regulated industries such as finance, healthcare, and legal, in cybersecurity, and in any company where sensitive information lives inside messy collaboration systems.

And let’s be honest.

That is most companies.

The lobster is not walking into a clean lab.

It is walking into SharePoint.

Good luck, little buddy.

NemoClaw Points to the Real Enterprise Need

This is why NVIDIA’s NemoClaw announcement matters as a signal, even if leaders are not using that specific technology yet.

The market is starting to recognize that enterprise agent adoption depends on security, privacy, trust, and guardrails. TechRadar reported that NemoClaw was introduced to make OpenClaw safer and more trustworthy for business use, with security and privacy tools intended to keep agents within bounds. (TechRadar)

That is the right direction.

Because enterprises do not need agents that can do everything.

Enterprises need agents that can do the right things, under the right controls, for the right reasons.

A powerful agent without boundaries is not an enterprise asset.

It is an incident waiting for a ticket number.

The future is not unrestricted autonomy.

The future is governed autonomy.

Human in the Loop Is Not Enough

This is where I think many enterprise AI conversations are still using the wrong mental model.

We keep saying “human in the loop.”

I understand why.

It sounds safe.

It suggests oversight.

It makes everyone feel like someone is watching the machine.

But too often, “human in the loop” becomes a rubber stamp at the end of an automated process that was poorly designed in the first place.

That is not enough for agentic systems.

We need Human at the Helm.

The difference matters.

Human in the loop says:

Let the system act, then let a person approve or correct it.

Human at the Helm says:

A person defines the mission, boundaries, authority, escalation paths, and success criteria before the system acts.

That is the leadership model agentic AI requires.

Humans should not merely approve outputs.

Humans should design the operating environment.

They should decide what the agent is allowed to do, what it is not allowed to do, what requires review, what must be logged, what must be reversible, and what outcomes actually matter.
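As a rough sketch of the difference, the boundaries can be written down before the agent runs and then checked against every action it proposes. The `Mission`, `ProposedAction`, and `evaluate` names below are hypothetical, and the rule that irreversible actions always go to a person is my assumption, not an established standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN_REVIEW = "needs_human_review"
    DENY = "deny"


@dataclass(frozen=True)
class Mission:
    """Defined by a person before the agent acts (illustrative fields)."""
    goal: str
    allowed_actions: frozenset
    review_required: frozenset
    escalation_contact: str


@dataclass(frozen=True)
class ProposedAction:
    name: str
    reversible: bool


def evaluate(mission: Mission, action: ProposedAction) -> Decision:
    """Check a proposed action against boundaries set in advance."""
    if action.name not in mission.allowed_actions:
        return Decision.DENY
    # Assumption: anything flagged for review, or anything that cannot be
    # undone, goes to a human before it happens.
    if action.name in mission.review_required or not action.reversible:
        return Decision.NEEDS_HUMAN_REVIEW
    return Decision.ALLOW


mission = Mission(
    goal="Resolve routine access requests",
    allowed_actions=frozenset({"open_ticket", "update_record", "grant_access"}),
    review_required=frozenset({"grant_access"}),
    escalation_contact="identity-team-lead",
)

routine = evaluate(mission, ProposedAction("open_ticket", reversible=True))
sensitive = evaluate(mission, ProposedAction("grant_access", reversible=True))
forbidden = evaluate(mission, ProposedAction("delete_account", reversible=False))
```

Here the human is not approving outputs after the fact. The person who wrote the mission decided in advance which actions run freely, which pause for review, and which never happen.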

The future of enterprise AI will not be won by companies that simply add humans at the end.

It will be won by companies that put humans in command of the system design.

Leaders Need an Agentic Operating Model

My advice to enterprise leaders right now: do not focus so much on tools and models. Those change weekly.

Do not start with:

Which agent platform should we buy?

Start with:

What work should become agentic? What work should always remain human?

That is a different conversation.

Some workflows are good candidates for agents.

Others are not ready.

Some processes are too broken.

Some data environments are too messy.

Some approval chains are too unclear.

Some teams cannot even explain how the work gets done today, which means they definitely should not automate it tomorrow.

Before enterprises scale agentic systems, they need an operating model.

At minimum, that model should answer five questions:

- What outcomes are agents allowed to pursue?

- What systems and data can they access?

- What decisions require human judgment?

- How will agent actions be logged and reviewed?

- Who is accountable when something goes wrong?

Those questions are not anti-innovation.

They are what make innovation survivable.
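The five questions above can also serve as a simple readiness gate: a workflow is not handed to agents until each one has a written answer. This is only a sketch, and the key names and the `missing_answers` and `ready_for_agents` helpers are hypothetical.

```python
# One key per question in the operating model (names are illustrative).
REQUIRED_ANSWERS = (
    "allowed_outcomes",     # What outcomes are agents allowed to pursue?
    "systems_and_data",     # What systems and data can they access?
    "human_decisions",      # What decisions require human judgment?
    "logging_and_review",   # How will agent actions be logged and reviewed?
    "accountable_owner",    # Who is accountable when something goes wrong?
)


def missing_answers(workflow: dict) -> list:
    """Return the questions that have no non-empty answer yet."""
    missing = []
    for key in REQUIRED_ANSWERS:
        value = workflow.get(key)
        if value is None or not str(value).strip():
            missing.append(key)
    return missing


def ready_for_agents(workflow: dict) -> bool:
    """A workflow is ready only when all five questions are answered."""
    return not missing_answers(workflow)


invoice_matching = {
    "allowed_outcomes": "Match invoices to purchase orders",
    "systems_and_data": "ERP read access, invoice inbox",
    "human_decisions": "Any mismatch above the approval threshold",
    "logging_and_review": "Every match logged, weekly sample review",
    "accountable_owner": "Accounts payable manager",
}

vendor_onboarding = {
    "allowed_outcomes": "Collect vendor documents",
    "systems_and_data": "Vendor portal",
    "human_decisions": "",
}

gaps = missing_answers(vendor_onboarding)
```

In this example the invoice workflow passes, while vendor onboarding is held back because nobody has said what requires human judgment, how actions are reviewed, or who owns the outcome.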

The Leadership Shift

Agentic systems will force leaders to change how they think about work.

For decades, enterprise work has been organized around roles, processes, systems, and approvals.

Agents introduce a new unit of work.

Delegated capability.

That means leaders will increasingly manage not only people and platforms, but also fleets of digital actors performing bounded tasks across the organization.

That sounds futuristic.

It is closer than most people think.

The leaders who adapt will not be the ones who chase every shiny new AI tool.

They will be the ones who understand how to redesign workflows, clarify decision rights, secure non-human identities, and keep human judgment at the center.

That is the real leadership opportunity.

Not replacing people.

Not blindly automating work.

Not pretending governance can wait until later.

The opportunity is to build enterprises where humans define the work that matters, and agents help reduce the friction around getting it done.

The Lobster Is Not Going Back

Near the end of his TED talk, Peter said, “The lobster is loose, and it’s not going back into the tank.” (The Singju Post)

I am convinced he is right.

Agentic AI is not going away.

The capabilities are too useful.

The access is too broad.

The momentum is too strong.

The enterprise can slow it down.

It can govern it.

It can shape it.

It can secure it.

But it cannot pretend this is just another chatbot wave.

Let me be clear: “the lobster” is not just OpenClaw. It has become a symbol for this larger transformational moment, where agentic AI is moving from playful experiment to enterprise reality.

The lobster is loose. 🦞

And now it is coming to the enterprise.

The question is not whether leaders can put it back in the tank.

They cannot.

The question is whether they can build the right environment for it to operate safely, usefully, and responsibly.

Give it an identity.

Give it boundaries.

Give it a purpose.

Give it oversight.

Give it a kill switch.

And above all, make sure there is still a human at the helm.

Because the future of enterprise AI will not belong to the companies with the most agents.

It will belong to the companies that know how to lead them.